On Thursday, June 11th, I will be discussing "Designing for Authentic Assessment: A Community Toolkit for Generative AI Integration in Computing Education." This session will discuss my progress on a research study examining how faculty integrate generative AI into computing education. This is just one of many conversations taking place at the Teaching and Learning Symposium, sponsored by #EDUCAUSE. I hope to see you there! Learn more and register here: https://events.educause.edu/symposiums/2026/new-approaches-to-assessment-design-for-ai-enabled-learning

Making Thinking Visible in the Age of AI

In a previous post, I walked through Fink’s Taxonomy of Significant Learning and argued that the dimensions most resistant to AI offloading are Human Dimension, Caring, and Learning How to Learn. If you have not read that post yet, it is worth starting there. This post picks up where that one left off.

Knowing which dimensions to target is necessary. The harder question is this: once you have redesigned an assignment to require Integration, Caring or Learning How to Learn, how do you actually know whether the student’s thinking got there? How do you make that thinking visible, not just to yourself, but to the student?

Why Thinking Must Be Seen

Let me start with an honest admission. Long before AI arrived, most of us were already assessing products and hoping the thinking happened somewhere in the middle.

A student submits a system design proposal. We grade the proposal. But did the student genuinely wrestle with tradeoffs? Did they consider the user population? Did they revise their mental model partway through? We have no idea, because the process was invisible to us.

Bransford’s foundational work on how people learn keeps returning to the same finding: learning is the result of thinking, not the result of submitting. Students arrive with preconceptions already formed. If we do not actively engage those preconceptions, new information slides off. They perform for the test and revert to old models the moment the course ends.

AI has not created this problem. It has simply removed our excuse for not solving it.

When a student can generate a convincing network architecture diagram in thirty seconds, or produce a well-structured post-mortem without ever having reflected on anything, the gap between product and thinking becomes impossible to ignore. The question is no longer “did the student submit something good?” It is “did the student actually think?”

Eight Thinking Moves That Matter

Ron Ritchhart, Mark Church, and Karin Morrison spent years researching what happens in classrooms where deep learning consistently occurs. Their conclusion, documented in Making Thinking Visible, is that those classrooms share one quality: the teachers have found ways to make the thinking process explicit, observable, and routine.

They identify eight types of thinking that matter most in deep learning:

Observing closely and describing what is there

Building explanations and interpretations

Reasoning with evidence

Making connections

Considering different viewpoints and perspectives

Capturing the heart and forming conclusions

Wondering and asking questions

Uncovering complexity and going below the surface

Read that list through the lens of Fink’s dimensions. Making connections is Integration made visible. Wondering and asking questions is Learning How to Learn in action. Considering different viewpoints is Human Dimension surfacing in real time. Capturing the heart and forming conclusions is Caring given a concrete form.

🗂️

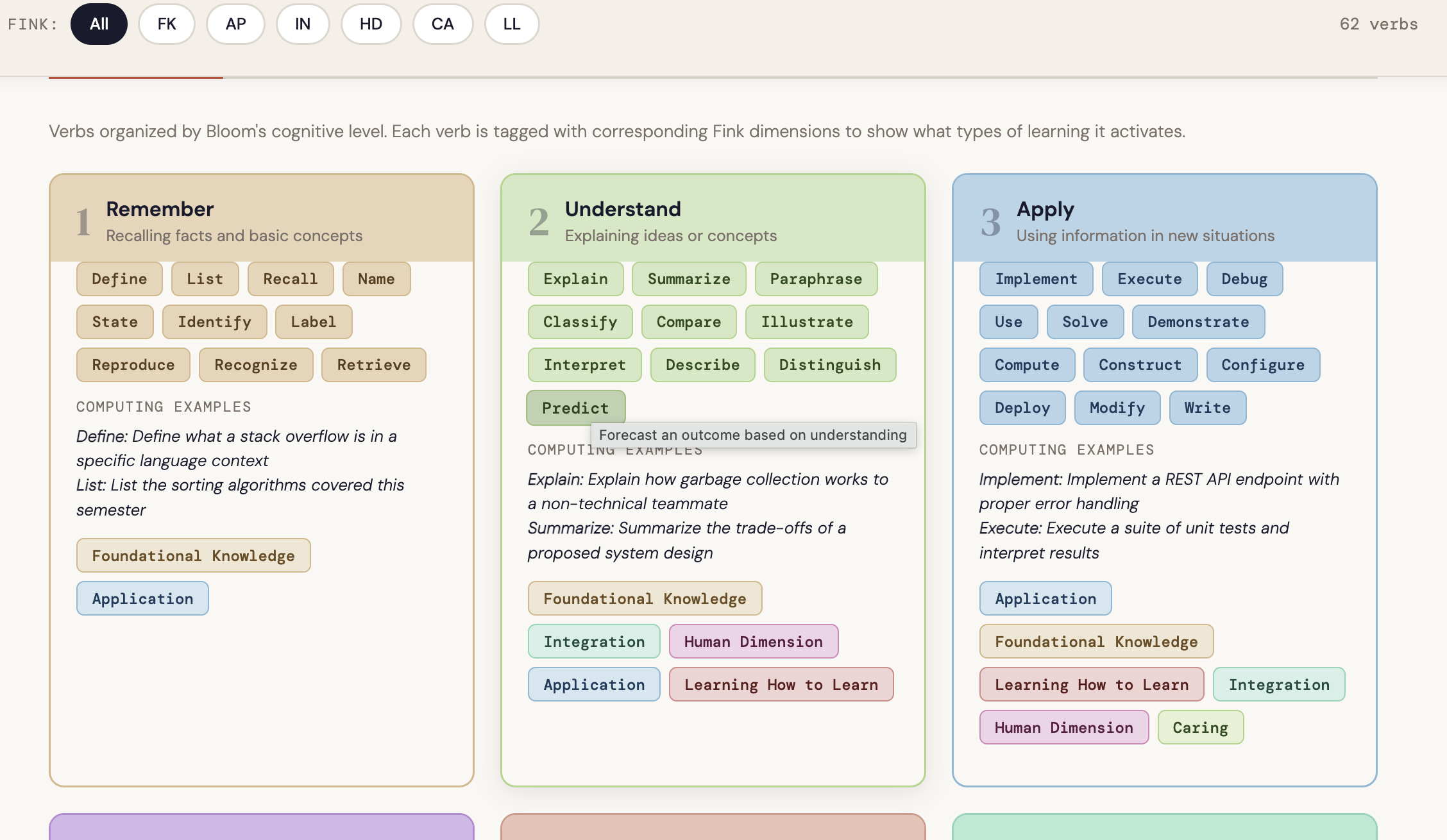

Interactive chart: To see the full picture of how each thinking type maps to Fink’s dimensions, explore the Fink-Ritchhart Connection Chart. Hover over any card to see which dimensions a thinking type activates and why the connection exists. It is a practical audit tool to keep open when you are reviewing an assignment or selecting a routine.

→ Open the Fink-Ritchhart Connection Chart

Fink tells us which dimensions produce significant learning. Ritchhart gives us the thinking moves that actually get students there.

The bridge between them is the thinking routine: a short, structured, repeatable cognitive scaffold that makes the reasoning process visible to both the instructor and the student, before, during, or after an assignment. The key word is repeatable. A thinking routine used once is an activity. Used consistently, it becomes a habit of mind.

What It Looks Like in Practice

The most common objection I hear from computing faculty is that thinking routines feel like they belong in a humanities classroom. They do not. Here is what they look like in technical contexts, alongside what an AI-generated response to the same prompt would typically produce.

Connect-Extend-Challenge in a Cybersecurity Course

After students analyze a new class of vulnerabilities, rather than simply asking them to summarize what they learned, ask three questions: What connections do you see between this attack surface and techniques you have encountered before? How does it extend your mental model of how systems fail? What does it challenge in your current assumptions about secure design?

🎯 Fink dimension: Integration

What AI output looks like: A fluent, well-organized paragraph connecting the vulnerability class to common attack taxonomies, citing OWASP or MITRE ATT&CK — with no personal frame of reference and no indication of cognitive struggle or surprise.

What authentic visible thinking looks like: A response that names a specific system or course context the student is connecting to, identifies a genuine point of confusion or revision in their thinking, and asks a follow-up question they actually want answered.

Think-Puzzle-Explore Before a Systems Design Assignment

Before students begin a major design task — architecting a distributed data pipeline or specifying a real-time embedded system — ask them to spend ten minutes on three prompts: What do you already think you know about this problem space? What puzzles you about it? What would you want to explore before committing to a design direction?

🎯 Fink dimension: Learning How to Learn

What AI output looks like: A generic overview of the problem domain, a list of standard considerations (latency, fault tolerance, scalability), and a suggested exploration path drawn from documentation.

What authentic visible thinking looks like: Idiosyncratic puzzles specific to this student’s prior experience, honest uncertainty about where to start, and questions that reflect what they personally do not yet understand rather than what the internet says is hard.

I Used to Think / Now I Think at Project Completion

At the end of a software engineering project, before students submit their final documentation, ask them to complete two sentences: “I used to think [X] about [the problem, the technology, the team process]” and “Now I think [Y].” Require them to explain what changed their thinking.

🎯 Fink dimensions: Caring & Learning How to Learn

What AI output looks like: A polished reflection arc that describes growth in general terms, references course concepts correctly, and lands on a tidy conclusion about professional development.

What authentic visible thinking looks like: Something specific: a named moment in the project where a decision backfired, a teammate conversation that reframed the problem, a line of code that revealed a misunderstanding the student did not know they had. Specificity is the signal.

Design Principle: Assess the Process

When you cannot assess the thinking directly, make the process the assessment.

This does not mean adding reflection questions as an afterthought. It means designing the assignment so that the thinking process is where the grade actually lives.

In an introductory programming course, this might mean asking students to annotate their code not with what it does but with why they made the choices they made. Not “initialize array here” but “I chose an array over a linked list because access patterns here are random and I wanted O(1) lookup, but I am not sure this holds if the input size grows.” That annotation is a window into reasoning that the code itself cannot provide.
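Here is a minimal sketch of what that kind of annotation could look like in Python. The function names and the scenario are hypothetical, invented only to contrast a comment that restates the code with one that records the reasoning.

```python
def build_lookup_table(readings):
    # "What" comment (restates the code, reveals no reasoning):
    #   copy readings into a list
    #
    # "Why" comment (the kind of annotation worth grading):
    #   I chose a list over a hand-rolled linked list because later steps
    #   jump to arbitrary positions, and list indexing is O(1). I am not
    #   sure this choice holds up if we start inserting at the front often,
    #   since that shifts every element.
    return list(readings)


def reading_at(table, position):
    # Random access by index: the operation the annotation above argues for.
    return table[position]


table = build_lookup_table([90, 72, 85])
print(reading_at(table, 1))  # prints 72
```

The code is trivial on purpose. What carries the evidence of thinking is the second comment, including the part where the student admits what they are unsure about.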

In a networking or operating systems course, it might mean asking students to document their debugging process rather than just their solution. What did they try first? What did that tell them? What did they have to revise? The process log is where the learning lives. The solution is just evidence that the process concluded.

In a capstone or project course, it might mean maintaining a design decision log throughout the semester. Every significant choice — whether architecture, data model, technology selection, or tradeoff resolution — gets a brief written rationale. When students defend their work at the end of the semester, they are not reconstructing decisions from memory. They are curating a record of their own thinking.

AI and the Cost of Offloading

I want to name this clearly because it gets lost in conversations about academic integrity.

The concern with AI offloading is not primarily that students are cheating. It is that they are forfeiting the experiences that produce the outcomes we most care about.

A student who uses AI to generate their debugging rationale has not practiced the metacognitive regulation that Fink’s Learning How to Learn dimension is built on. They have not developed the habit of monitoring their own understanding and adjusting. They have not had the experience of being genuinely stuck and finding their way through. That experience is not a side effect of learning computing. It is the mechanism by which computing is learned.

⚠️

When we assign AI without designing for visible thinking, we are not just making assessment harder. We are removing the conditions under which the most durable and significant learning occurs.

Getting Started: Three Design Questions

Before assigning any major project, ask yourself three questions.

🧭

1. What thinking do I actually want to see? Name the specific thinking moves from Ritchhart’s list that the assignment should require. Use the Fink-Ritchhart chart to check which Fink dimensions those moves activate. If Human Dimension, Caring, or Learning How to Learn are absent, you have identified your highest-risk area for offloading.

2. Where will that thinking show up in the student’s work? If the answer is “in the final product,” reconsider the design. The final product is where AI performs best. The thinking needs a dedicated, structured space: a routine checkpoint, an annotation layer, a decision log, an oral defense.

3. How will you know it is genuine? Look for specificity. Genuine thinking produces idiosyncratic responses: a named moment of confusion, a connection no one else in the class would make, a question that only makes sense given this student’s prior experience. Generic fluency is the signal that the thinking may have been skipped.

Use the Project Zero Thinking Routine Toolbox to select a routine that fits your course context. It is free, searchable by purpose, and includes facilitation guidance. In most cases, a well-chosen routine adds less than fifteen minutes to the student’s workload and gives you far more information than the artifact alone.

📥

Free resources to go with this post

Fink-Ritchhart Interactive Chart — hover and filter to audit any assignment

Connect-Extend-Challenge Template — discipline-specific prompts for CS, engineering, and HCI

Process Depth Rubric — for assessing how students think, not just what they submit

The goal is not to make every assignment a reflection exercise. It is to build enough visibility into your course design that you can actually see whether the learning you care about is happening.

Because if you cannot see the thinking, you cannot teach it.

And in the age of AI, if you cannot see it, your students may have already learned to skip it.

What thinking routines are you already using in your courses, or curious about adapting for a technical context? Share your approach or questions in the comments.

Beyond Bloom’s: What Fink’s Taxonomy Means for Computing Courses, AI and Assessments

If you have been in higher education for a while, you have probably encountered Bloom’s Taxonomy: Remember, Understand, Apply, Analyze, Evaluate, Create. It is the cognitive hierarchy that tells you whether you are asking students to think deeply or just recall facts. If you are new or need a quick refresher, review this brief primer.

Bloom’s is genuinely useful. But L. Dee Fink, in his book Creating Significant Learning Experiences (first published in 2003, revised in 2013), noticed something important. Bloom’s only addresses one dimension of how humans learn. It tells you how cognitively demanding a task is. It says almost nothing about whether students will care about it, connect it to anything real, or know how to keep learning on their own after the course ends.

For computing and STEM faculty specifically, this gap has real consequences. We spend enormous energy designing cognitively rigorous assessments and then wonder why students who passed the exam still struggle to function in an internship. Or why a technically strong student falls apart in a team environment. Or why a graduating senior freezes up when asked to learn a new framework on their own.

Bloom’s tells you how hard the thinking is. Fink’s tells you whether it matters to the person doing the thinking.

The Six Dimensions of Fink’s Taxonomy

Fink’s taxonomy is not a hierarchy. It is an interactive web where every dimension strengthens the others. Here is what each one looks like in the context of a computing or STEM course.

| Dimension | What It Means | In a Computing Course |
| --- | --- | --- |
| Foundational Knowledge (FK) | Understanding and remembering key concepts, facts, and principles | Data structures, algorithm analysis, language syntax |
| Application (AP) | Critical thinking, creative thinking, practical skills, managing projects | Implementing algorithms, debugging, system design |
| Integration (IN) | Connecting ideas across subjects, disciplines, and life contexts | Seeing how OS concepts relate to security; connecting theory to production code |
| Human Dimension (HD) | Learning about oneself and others, including identity, perspective, and collaboration | Code review etiquette, equity in technical interviews, accessibility awareness |
| Caring (CA) | Developing new feelings, interests, and values; becoming genuinely invested | Caring about software quality, open-source ethics, end-user impact |
| Learning How to Learn (LL) | Metacognition, self-direction, and inquiry skills; becoming a self-improving practitioner | Reading documentation, developing a debugging mindset, knowing when to ask for help |

Why This Matters Right Now

Here is the uncomfortable truth about generative AI and course design. AI is extraordinarily good at Foundational Knowledge. It can explain recursion, walk through a sorting algorithm, or produce syntactically correct code on demand. If your assessments primarily target FK, students do not need to engage with the material. They can simply delegate the work.

But AI cannot learn to care about software quality on your student’s behalf. It cannot develop their debugging mindset. It cannot give them the experience of genuinely connecting theory to a problem that matters to them personally. The upper dimensions of Fink’s taxonomy, specifically HD, CA, and LL, are exactly where human learning is irreplaceable. They are also exactly where most computing assessments underinvest.

The AI Audit

For each of your major assessments, ask yourself: which Fink dimensions does this assignment actually require the student to engage? If you only see FK and AP, and especially if you only see FK, you have found your highest-risk assignment for AI substitution. That is your starting point for redesign. A good place to start is by auditing the verbs you are already using. The Computing Verb Atlas lets you search any verb and immediately see which Bloom’s level and Fink dimensions it activates, making the audit process much faster. [Open the tool.]

Practical Examples: Fink in a CS Course

The Difference One Question Makes

Consider a standard data structures assignment: “Implement a binary search tree with insert, delete, and search operations.”

This prompt targets Foundational Knowledge and Application. It misses Integration, Human Dimension, Caring, and Learning How to Learn entirely. It is also almost entirely delegatable to AI.

Now add one question: “Describe a real system you use daily that likely relies on a tree structure. How does your implementation compare to what you would expect in production? What surprised you?”

That one addition requires Integration (connecting BST theory to the real world), Human Dimension (drawing on the student’s own experience), Caring (investment in a system they actually use), and Learning How to Learn (comparing a learning exercise to production reality). AI can generate plausible-sounding text in response to that question. But the student still has to have had the experience to write something genuine.

Caring in a Systems Course

Caring does not mean students have to love your subject. Fink defines it as developing new interests, values, or feelings, including professional values. In a software engineering course, Caring might look like this: Does the student give any thought to writing readable code? Do they consider the next person who will maintain their work? Are they forming a genuine perspective on open-source licensing?

These are not soft skills. They are what separates a developer from a professional.

Learning How to Learn in Every Course

The LL dimension is the most underrepresented in computing curricula and arguably the most important one for long-term career success. The useful life of a specific technology stack is measured in years. The ability to independently pick up a new one is what sustains a career over decades.

What does LL look like as an assessment? It might be a reflection on which resources a student used to solve a difficult problem and why they chose those resources. It could be a post-mortem where students analyze their own debugging process. It can be as simple as asking students to document what they tried before asking for help, making their problem-solving process visible rather than just its final output.

The goal is not students who have learned your course. It is students who know how to keep learning after it ends.

A Note on Integration

Integration is the dimension most likely to shift how a student sees your discipline altogether. It is the moment when a student realizes that the graph algorithms from their CS course are the reason their navigation app works. Or that the ethics discussion in their intro course was not a detour but actually a preview of every technical decision they will make professionally.

Adding Integration to an assignment rarely requires a redesign. It often requires one additional prompt: “How does this connect to something outside this course?” The specificity of the answer will tell you more about a student’s genuine understanding than the code they submitted.

Getting Started: The Fink Audit

Before the next post, try this exercise. Take your next major assignment and map it against the six dimensions. Which dimensions does it genuinely require? Which are completely absent? Then ask yourself: what is the smallest possible change that would add one missing dimension without increasing your grading burden?

References

Anderson, L.W. and Krathwohl, D.R. (Eds.). (2001). A taxonomy for learning, teaching, and assessing. Longman.

ACM Committee for Computing Education in Community Colleges (CCECC). Bloom’s for Computing: Enhancing Bloom’s Revised Taxonomy with Verbs for Computing Disciplines (draft report).

Fink, L.D. (2003). Creating significant learning experiences. Jossey-Bass.

Don't Judge Me: How I Used Claude & the Real Housewives to Learn to Program

Let me set the scene.

It's late. I'm supposed to be writing something important. Instead, I'm watching Porsha drag Kenya Moore across my screen, not on Bravo, but inside an interactive network visualization I built myself. In my browser. Using actual code.

I know. I know.

But hear me out, because what happened between me and a D3.js network graph and the entire Real Housewives franchise might just change how you think about learning to program.

I've Been "About to Learn to Code" for Years

I have a Ph.D. I teach faculty how to design courses. I study how people learn. And for years (embarrassingly many years) I could not make programming stick.

I took Python. I understood it in the moment. Two weeks later? Gone. I took a JavaScript tutorial. Same story. I'd get excited, do a few lessons, and then life would intervene and I'd lose the thread entirely.

The problem wasn't the content. It was that I had no reason to keep going. No project. No stakes. No drama.

Enter: Claude.

The Conversation That Started Everything

I was sitting in on a meeting with a colleague (a genuinely brilliant person who builds interactive educational tools for fun) and he was moving fast. He showed me a network visualization he'd built using something called D3.js and an AI coding tool called Codex. Characters from Game of Thrones were bouncing around the screen, connected by lines showing their relationships. You could drag them. Zoom in. Explore.

It was maybe 300 lines of code. He built it by describing what he wanted in plain English and letting the AI write it.

I thought: I could do that. But with something I actually care about.

And what do I care about? Among other things: the Real Housewives of Atlanta, New York, Beverly Hills, New Jersey, and Potomac. Don't @ me.

What I Actually Did (It's Simpler Than You Think)

I opened Claude and typed, almost verbatim, this prompt:

"Create a simple D3.js network visualization as a single HTML file. Show connections between Real Housewives. I want to be able to drag the nodes around."

That's it. That was the whole prompt.

What came back was a single HTML file with NeNe, Kenya, Porsha, Bethenny, Teresa, and fifteen of their castmates mapped out in a glowing, color-coded, draggable constellation. Each franchise had its own color. Each relationship (friendship, feud, alliance, family, frenemy) had its own line style. Hover over a node and you get the tea. Literally. A little bio pops up.

I double-clicked the file. It opened in my browser. And I sat there for a solid minute just dragging housewives around my screen like a woman who had finally arrived.

But Here's the Part That Actually Made Me Learn

I didn't stop at "ooh, pretty." I started asking questions. Which part of this code is actually about the Housewives, and which part is the instructions for drawing it?

Claude showed me. The answer was two sections: the cast list (readable by any human: you could add a new housewife just by copying a line) and the drama list (relationships, who connects to who). Everything else (the bouncing physics, the color gradients, the hover effects) was D3.js doing the heavy lifting.
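To make that split concrete, here is a small sketch of the two data sections, written in Python rather than the JavaScript Claude actually generated for me. The key names (`id`, `source`, `target`) follow the convention D3's force layout commonly uses, but the helper functions are hypothetical, and the relationship entries are illustrative placeholders, not the real contents of my file.

```python
import json

# The "cast list": one line per node. Adding a housewife means copying a line.
nodes = [
    {"id": "NeNe", "franchise": "Atlanta"},
    {"id": "Kenya", "franchise": "Atlanta"},
    {"id": "Porsha", "franchise": "Atlanta"},
    {"id": "Bethenny", "franchise": "New York"},
    {"id": "Teresa", "franchise": "New Jersey"},
]

# The "drama list": one line per relationship (placeholder entries only).
links = [
    {"source": "Porsha", "target": "Kenya", "type": "feud"},
    {"source": "NeNe", "target": "Porsha", "type": "friendship"},
]


def add_housewife(nodes, name, franchise):
    """Append a new node; nothing else in the file has to change."""
    nodes.append({"id": name, "franchise": franchise})


def dangling_links(nodes, links):
    """Return links that point at a name missing from the cast list."""
    known = {node["id"] for node in nodes}
    return [
        link for link in links
        if link["source"] not in known or link["target"] not in known
    ]


# Everything a drawing library needs from me fits in this one structure.
graph_json = json.dumps({"nodes": nodes, "links": links}, indent=2)
print(dangling_links(nodes, links))  # prints [] when every link has both ends
```

A check like `dangling_links` targets one easy way to break a graph like this: adding a relationship for someone who is not yet in the cast list.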

Once I understood that, I started poking around. I changed a color. I added a housewife. I broke something, showed Claude the error, and it fixed it while explaining what went wrong. Then I tried to fix the next thing myself before asking.

What I Actually Learned (For Real This Time)

- What a JavaScript library is and why D3.js exists (it's a pre-built toolbox so you don't have to write the physics of bouncing dots from scratch)

- What nodes and links are in network visualization (dots = things, lines = relationships, that's it)

- How an HTML file can contain CSS, HTML, and JavaScript all in one place

- What it means to declare a variable and store data in it

- How GitHub Pages lets you turn a file on your computer into a real website anyone can visit

None of this came from a tutorial. It came from having a specific thing I wanted, building it, and then being curious about how it worked.

The Bigger Thing I'm Sitting With

I study learning. I've spent 15+ years thinking about how people acquire knowledge, what makes it stick, what makes it fall off. And I have to be honest with myself: the approach I just described (interest-driven, project-based, AI-assisted) is exactly what the research says works best.

It's just-in-time learning. You need to know something, so you learn it. You have a reason to remember it because it's connected to something you made.

What AI tools like Claude have changed is the barrier to entry. That's not cheating. That's smart instructional design.

Should You Try This?

Yes. Especially if you've been "about to learn to code" for a while.

Think of something you actually care about (a TV show, a research interest, your family tree, your favorite athletes) and ask Claude to build a network visualization of it. Drag the nodes around for a minute. Then ask: "Which part of this code is actually my data?" That question will take you further than any tutorial I've tried.

And Andy Cohen, if you're reading this... can we talk about a collaboration? I'm thinking interactive franchise maps, relationship timelines, reunion drama visualized in real time. I have the vision. I have the AI. I have the love for the franchise.

I'm just not built to be a Housewife myself. The taglines alone would stress me out.

I Put Two AI Tools to the Test on the Same Task: Here's What I Learned

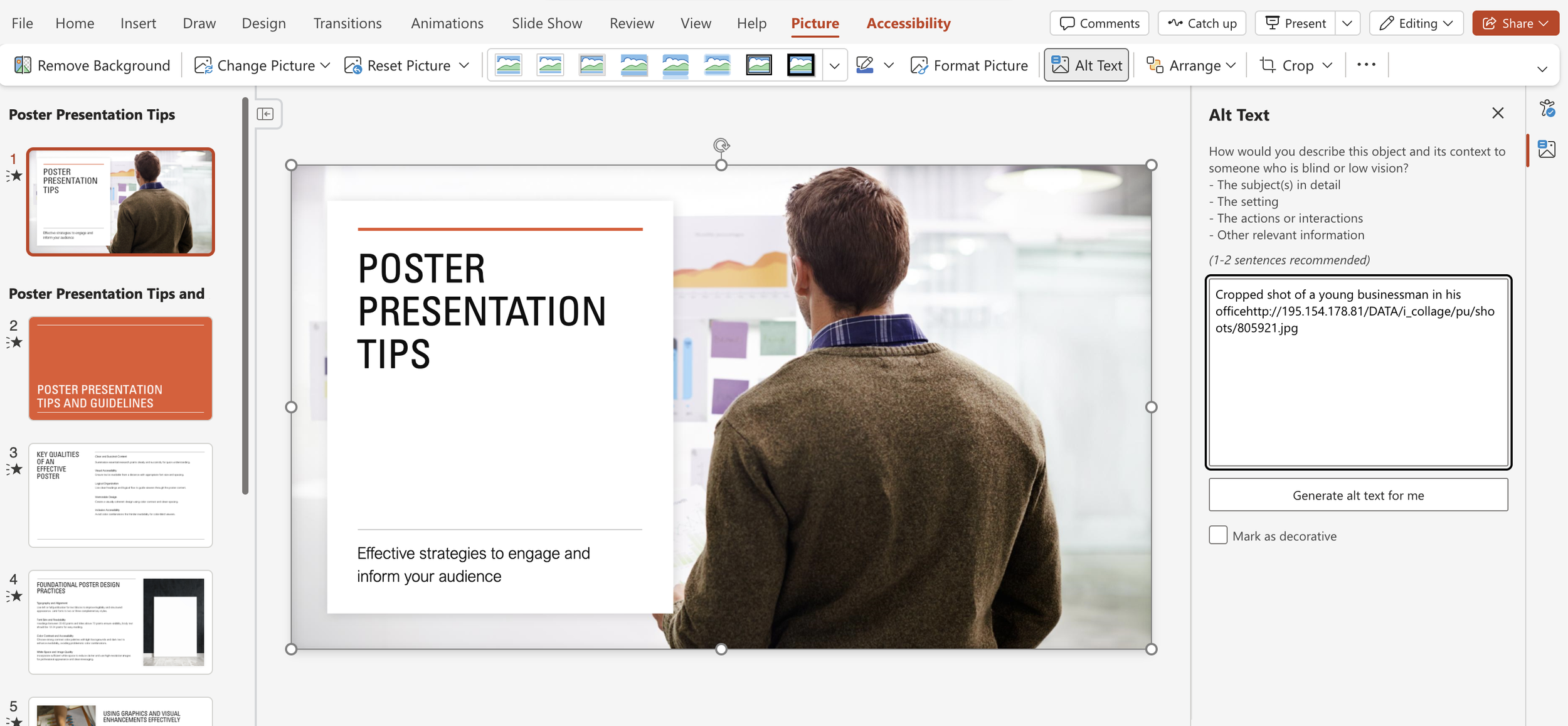

I had a PDF of workshop slides that needed to become a polished, accessible PowerPoint.

Instead of rebuilding it from scratch myself, I decided to test two different AI tools on the same task: Microsoft Copilot and Claude. Same source file. Same accessibility requirements. Very different results. Here is what happened with each one, what I learned from the comparison, and what it means for anyone using AI tools in their teaching and design workflows.

Part 1: What Happened with Microsoft Copilot

The Prompt

"Redesign this content into a PowerPoint presentation that is both aesthetically pleasing and strictly follows Section 508 Accessibility guidelines.

Crucial Requirement for Titles: Every slide must use the official PowerPoint 'Title' placeholder for its heading. Do not use floating text boxes for slide titles. The title text must be visible in 'Outline View' to ensure screen readers can identify the slide's purpose.

Layout & Design:

- Use the standard 'Title and Content' layout for all body slides.

- Ensure a high contrast ratio between text and background.

- Mark all decorative background elements or repetitive shapes as 'Decorative' within the file metadata.

- Provide descriptive Alt Text for all functional icons or data-driven images.

Please generate the .pptx file now using Indiana University's official palette."

What Copilot Did

Behind the scenes, Copilot parsed the PDF content, performed a web search to pull IU's official hex codes (Crimson #990000 and Cream #EDEBEB from IU's branding resources), then built the slide deck using Python. The entire process, reading the prompt, searching for brand colors, parsing the PDF, generating the file, took only a few seconds.

That's the part that's genuinely impressive. A task that would take 30 to 60 minutes to complete manually, longer if you're applying accessibility standards carefully, was done almost instantly.

Where It Got Complicated

The IU colors were retrieved correctly, but they didn't fully appear in the slides. This is worth understanding because it's not a bug. It's an architectural limitation.

Copilot built the file programmatically, which means it set colors at the object level rather than through the slide master. No theme-level color changes, no branded backgrounds, no full visual overhaul. It also made a deliberate accessibility trade-off: IU Crimson as a background color risks low contrast with dark text, so Copilot defaulted to safer neutral styling instead. When accessibility and branding competed, it chose accessibility. That's technically the right call, even if the result isn't visually what you'd expect.
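The contrast concern is easy to check for yourself. The sketch below is my own calculation, not anything Copilot showed me. It assumes the WCAG 2.0 relative luminance and contrast ratio formulas and the 4.5:1 minimum for normal-size text at Level AA, which is the level the revised Section 508 standards reference. The function names are hypothetical.

```python
def _linearize(channel_8bit):
    """Convert one 0-255 sRGB channel to linear light (WCAG 2.0 formula)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1 (identical) to 21 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


IU_CRIMSON = "#990000"
AA_NORMAL_TEXT = 4.5  # assumed threshold: WCAG 2.0 Level AA, normal-size text

for text_color in ("#000000", "#FFFFFF"):
    ratio = contrast_ratio(IU_CRIMSON, text_color)
    verdict = "passes" if ratio >= AA_NORMAL_TEXT else "fails"
    print(f"{text_color} on crimson: {ratio:.2f}:1 ({verdict} AA)")
```

Under these assumptions, black text on a crimson background comes out at roughly 2.4:1, well short of the threshold, while white text on crimson lands at roughly 8.9:1. That is consistent with the trade-off described above: crimson backgrounds are only safe if the text color changes with them.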

The takeaway is that understanding why Copilot made those choices matters just as much as knowing how to use the tool. The foundation was there and could be refined from that starting point. When I updated the prompt to have IU colors integrated, it added crimson rectangles that had to be tagged as decorative images. Click Iu_poster_tips.pptx to see the slide deck.

Part 2: What Happened with Claude

The Prompt

I uploaded the same PDF and gave Claude a single instruction:

"Redesign this so that it is a PowerPoint that is aesthetically pleasing and meets Accessibility guidelines as defined by Section 508 of the Rehabilitation Act." Then I waited. (NOTE: If you choose to use Claude to create a PowerPoint, make sure it is not being used for content that contains sensitive material such as student data, etc.)

What Claude Did

Click title_abstract_writing_accessible_v2 1.pptx to see the slide deck.

Within about two minutes, Claude read all 16 pages of content, selected a cohesive teal and gold color palette, and rebuilt all 13 slides from scratch as a fully formatted .pptx file, complete with icon cards, numbered sections, a title slide, and consistent layouts throughout.

Honestly, I liked what it produced. The design was clean, intentional, and visually stronger than the original PDF. The color choices were bold but readable, the layouts were structured, and it felt like something I would actually use in a workshop setting.

The Process and the Gotchas

After reviewing the slides, I made one follow-up request: mark any shapes that weren't icons as decorative. Claude updated the file. But when I opened the PowerPoint and ran the built-in Accessibility Checker, it flagged 125 items still needing attention.

Sample Accessibility Assistant

All 125 items were decorative shapes: squares, rectangles, and design elements that carry no content meaning. Claude's update had been a step in the right direction, but PowerPoint's built-in checker caught what remained.

The fix itself was fast. I clicked through the flagged items, identified which six were actual images needing alt text, wrote descriptions for those, and let PowerPoint automatically mark the remaining 119 as decorative. Less than a minute of cleanup.

One More Snag: The Title Compliance Issue

When I uploaded the finished file to Microsoft 365 and ran the Accessibility Checker again, my titles were flagged as non-compliant. The issue? Claude had used floating text boxes instead of official PowerPoint placeholder elements. The Accessibility Checker didn't recognize them as proper slide titles.
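If you want to catch this before uploading, one option is to look inside the file directly. A .pptx is a zip archive of XML parts, and a real title shows up as a placeholder element with a type of `title` or `ctrTitle`. The sketch below is not the script I used. It is a standard-library-only check under those assumptions, and the function name is hypothetical.

```python
import re
import xml.etree.ElementTree as ET
import zipfile

# PresentationML namespace used by slide XML parts.
P_NS = "http://schemas.openxmlformats.org/presentationml/2006/main"
TITLE_TYPES = {"title", "ctrTitle"}  # regular title and centered (title slide) title


def slides_missing_title(pptx_file):
    """Return the slide parts that contain no title placeholder at all.

    pptx_file may be a path or a binary file-like object.
    """
    missing = []
    with zipfile.ZipFile(pptx_file) as archive:
        slide_parts = [
            name for name in archive.namelist()
            if re.fullmatch(r"ppt/slides/slide\d+\.xml", name)
        ]
        # Sort by slide number so slide10 does not land before slide2.
        slide_parts.sort(key=lambda name: int(re.search(r"\d+", name).group()))
        for name in slide_parts:
            root = ET.fromstring(archive.read(name))
            placeholder_types = {ph.get("type") for ph in root.iter(f"{{{P_NS}}}ph")}
            if not placeholder_types & TITLE_TYPES:
                missing.append(name)
    return missing
```

Calling `slides_missing_title("deck.pptx")` on a hypothetical file returns names like `ppt/slides/slide3.xml` for slides whose headings are only floating text boxes. It has limits: part numbers do not always match the on-screen slide order, and a title placeholder that exists but is empty would still pass this check, so it complements the Accessibility Checker rather than replacing it.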

To fix it properly, I rebuilt the presentation using python-pptx, which let me force the use of the Title and Content and Title Slide layouts, assigning each title a proper placeholder identifier that the Accessibility Checker actually recognizes. I also resolved a z-order issue where background design elements were occasionally rendering on top of the text.

I'm currently working on translating this coding-based fix back into a simple prompt-based workflow for anyone who doesn't want to touch Python. I'll share that when I have it worked out.

Putting It Together: What the Comparison Reveals

Both tools got me to a usable, accessible PowerPoint faster than I could have done it manually. But they got there differently, and those differences matter depending on what you're trying to accomplish.

Copilot is fast, integrated directly into Microsoft 365, and appropriately cautious. It prioritized accessibility compliance over branding, which is technically correct even if the visual result felt incomplete. If you're working within a campus ecosystem where Microsoft tools are the standard, Copilot is a solid starting point; just go in knowing you'll likely need to refine the visual branding manually afterward.

Claude made bolder design choices and produced a more visually polished output from a single prompt. The teal and gold palette, the icon cards, the numbered sections: those were all Claude's decisions, and they were good ones. The accessibility gaps it left behind were real but fixable in minutes using PowerPoint's own tools.

Neither tool alone was the complete solution. Both made changes to the original text. In this case it was an improvement, but you should check to make sure your text retains your intended meaning. What worked was the combination: AI generation followed by a quick accessibility checker review. A process that would have taken hours manually was done in under 10 minutes.

The Practical Takeaway

Running the accessibility checker at the end should be standard practice regardless of what tool you use to generate your file. Think of it as a spell check for inclusion. The checker doesn't just catch AI mistakes; it catches human ones too. Build it into your workflow and it becomes fast and routine.

AI tools are genuinely useful for this kind of work. They're not plug-and-play replacements for design judgment or accessibility expertise, but they dramatically lower the time cost of getting to a first draft that's already doing most of the right things. That's worth paying attention to.